… or how I ended up writing a CouchDB proof of concept app?

Once upon a time I set out on a journey to discover the NoSQL land. I decided that running simple queries wouldn’t be interesting enough, so I chose to build an app on top of a NoSQL database. The main idea was an app that would dynamically update itself as geographic data flowed in. There are myriads of geo-data sets available on the internet, so you can pick your favorite one and load it into your SQL database of choice. In my case the primary source of data was a proprietary database, or more specifically, one table in it that is continuously updated with new data.

To make that data visible on my map I needed to:

* buffer the huge number of records, so as not to overload other services with heavy traffic and not to flood the front-end
* convert them to my own representation
* display them in a presentation layer in a browser, since a browser-based front-end was the easiest and fastest to develop

The idea of the front-end HTML page was to show new points on the map: from the moment the page is opened, records that appear in the database table should show up on the screen interactively.

Toys used

For the first step I chose the RabbitMQ broker. A queue on the broker would receive messages, one message per row of the database table. Then I’d use some simple Groovy middleware to convert the data to the appropriate format and put it into another database, this time one specific to my app. You may ask why incorporate another database. It is good for separating environments: the original data may contain sensitive content that should be anonymised, or we may just not feel comfortable exposing the whole database of some XYZ-system only to get access to one of its tables. Since I chose HTML+JS for my presentation layer, without any application-server-based back-end, I decided on CouchDB. It seemed like a perfect match for this scenario. Why? Ease of use and a REST API with JSON responses, which is just great for interacting with my simple front-end. The flow of things was as shown on the image below:

Avro – for the beginning

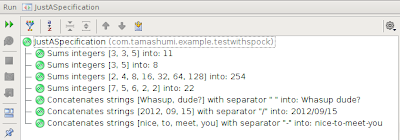

As you can see, I’ve chosen JSON as my data format. I considered Apache Avro at first, but using it was a real pain in the ass. Avro is used in Apache Hadoop as a serialization layer, so it would seem OK, but it has virtually no documentation. Once you tear through the unintuitive interface and manage to handle all those unthinkable exceptions, though, the library does have a few pros. It’s great that it does not require code generation; I like that everything is done on the fly. It also offers sending data in a binary format, which I didn’t need, but is nevertheless a nice feature. What I certainly didn’t like was its orientation towards files rather than chunks of data, so it was not obvious how I should send data over the wire. Then I found out it can produce JSON output, which would have worked for me, except that the output could not be parsed by other JSON libraries :) (I asked about that on Stack Overflow, but with no luck). If my whining hasn’t put you off and you would still like to see how to use Avro, try this unit test in the project’s GitHub repo: AvroSimpleTest.groovy

Svenson

I dropped Avro in favour of a simple JSON library called Svenson, and that was painless. The only thing I was forced to do was to create my model class in Java, while the rest of the project is written in Groovy. I have no idea why that was necessary, and I didn’t want to look into it.
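To give a rough idea of what the conversion step boils down to, here is a minimal sketch. It is not the project's code: the real middleware is Groovy with Svenson, while this uses JavaScript to match the front-end side, and the column names (`ID`, `LAT`, `LON`, `CREATED_AT`) as well as the function names are made up for illustration.

```javascript
// Convert one source-table row into the event the map needs.
// Only whitelisted fields are copied, so anything else in the row
// (for example a hypothetical OPERATOR column) never leaves the source system.
function rowToEvent(row) {
  const lat = Number(row.LAT);
  const lon = Number(row.LON);
  if (!Number.isFinite(lat) || lat < -90 || lat > 90 ||
      !Number.isFinite(lon) || lon < -180 || lon > 180) {
    throw new Error('row ' + row.ID + ' has invalid coordinates');
  }
  return {
    id: String(row.ID),
    lat: lat,
    lon: lon,
    // Normalise to an ISO 8601 UTC string so timestamps sort as text.
    timestamp: new Date(row.CREATED_AT).toISOString(),
  };
}

// One table row becomes one JSON message for the broker queue.
function rowToMessage(row) {
  return JSON.stringify(rowToEvent(row));
}
```

Copying a fixed set of fields, instead of deleting the sensitive ones, means a column added to the table later does not leak by accident.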

RabbitMQ

Next on the way is RabbitMQ, which is fed with records by a feeding middleware written in Groovy. Since I use ActiveMQ on a day-to-day basis, I decided to try something new. This broker is a really nice piece of software. It is written in Erlang and felt really fast. What’s more, it has extensive capabilities and is easy to approach for anyone familiar with messaging (JMS and friends). For such a lightweight product it is really powerful, and it implements AMQP!

CouchDB

From the broker’s queue, messages are fetched by another piece of middleware, just to be put into CouchDB. This database is also written in Erlang. It’s very reliable; however, the way it handles refreshing views isn’t the most pleasant one, performance-wise. A word of advice: if you’re on a Debian derivative, be cautious with the version in the apt repository. It’s rather _ancient_. Also remember to add allow_jsonp = true to your config file /opt/couchbase/etc/couchdb/local.ini. It’s not enabled by default, and without it you get empty responses from the CouchDB server. The problem here is that the browser doesn’t allow a script to query a web server whose hostname differs from the one the script originates from. More on this case here. It seems my problem could also be overcome by making the URL in index.html and the hostname CouchDB listens on the same address. I’ve also created a view that exposes an event by key: view code
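For readers who haven't seen a CouchDB view before, a sketch of what such a view might look like follows. This is an assumption-laden illustration rather than the view linked above: the document fields (`timestamp`, `lat`, `lon`), the choice of the timestamp as the key, and the names `events` and `by_timestamp` are all hypothetical.

```javascript
// Map function in the form CouchDB's JavaScript view server expects.
// `emit` is provided by CouchDB while the view index is being built.
const mapEvent = function (doc) {
  // Skip documents that are not complete events.
  if (doc.timestamp && typeof doc.lat === 'number' && typeof doc.lon === 'number') {
    // Key: timestamp (assumed), value: just the coordinates.
    emit(doc.timestamp, { lat: doc.lat, lon: doc.lon });
  }
};

// Design document holding the view. CouchDB stores map functions
// as source strings, hence toString().
const designDoc = {
  _id: '_design/events',
  language: 'javascript',
  views: {
    by_timestamp: { map: mapEvent.toString() },
  },
};
```

With a database called `events` (again, a made-up name), the design document would be saved under `/events/_design/events` and the view queried at `/events/_design/events/_view/by_timestamp`. Keying by timestamp lets a client ask only for rows newer than the last one it has seen.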

Presenting the dots

In place of a back-end I’ve written some jQuery-based AJAX calls, nothing too fancy. Everything necessary for the presentation layer is in this file.
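The gist of such a call can be sketched as below. This is not the file from the repo, just a minimal illustration assuming a view keyed by ISO timestamp strings that returns `{lat, lon}` values; the view path and all function names are hypothetical, and jQuery is passed in as `$` so that nothing depends on a global.

```javascript
// Hypothetical database, design document and view names.
const VIEW_PATH = '/events/_design/events/_view/by_timestamp';

// Build the view query URL. With a lastKey, only rows from that key
// onwards are requested.
function buildViewUrl(baseUrl, lastKey) {
  if (lastKey === null) {
    return baseUrl + VIEW_PATH;
  }
  // CouchDB expects keys as JSON, so a string key keeps its quotes.
  return baseUrl + VIEW_PATH + '?startkey=' +
    encodeURIComponent(JSON.stringify(lastKey));
}

// Turn a view response into map points. startkey is inclusive, so rows
// that are not newer than lastKey are filtered out here.
function newPoints(response, lastKey) {
  return response.rows
    .filter(function (row) { return lastKey === null || row.key > lastKey; })
    .map(function (row) {
      return { key: row.key, lat: row.value.lat, lon: row.value.lon };
    });
}

// One polling round; onPoints receives the points to draw.
function pollOnce($, baseUrl, lastKey, onPoints) {
  return $.ajax({
    url: buildViewUrl(baseUrl, lastKey),
    dataType: 'jsonp', // relies on allow_jsonp = true on the CouchDB side
    success: function (response) {
      onPoints(newPoints(response, lastKey));
    },
  });
}
```

A page would call `pollOnce` from a timer and remember the key of the last point it drew. One known limitation of this sketch: two events with exactly the same timestamp are treated as one "last seen" key, so the second could be skipped.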

Things to consider

Please bear in mind that this whole application is a playground rather than a full-fledged project! Having built all the parts, I have doubts about some of the architectural decisions I made. I don’t think security has been taken into account seriously enough. Also, scalability was never an issue ;-) If you have thoughts about any of the aspects mentioned in this post, please feel free to comment or contact me directly :) You may also try the application yourself, it’s on GitHub.

A couple of years ago I wasn't a big fan of unit testing. It was obvious to me that well-prepared unit tests are crucial, though. I didn't yet know exactly why they were crucial; I just felt they were important. My dislike of writing automated tests was mostly related to the effort necessary to prepare them. Also, spaghetti code was easy to spot in test sources.
